> It's true that they're not cryptographic hashes, but the false positive rate is vanishingly small.

What are you talking about? Ask anyone who has worked in this space: false positives abound, especially when you're looking for fuzzy matches. And you have to look for fuzzy matches, otherwise slight modifications to illegal images would bypass the detection system.

> Photos of your own kids won't trigger it, nude photos of adults won't trigger it

In general, if two images kind of look like one another when you squint, they're going to have similar perceptual hashes. A lot of unrelated things look similar to one another when you squint, and a lot of unrelated things are going to have similar perceptual hashes. And, again, you'll be doing fuzzy matches on these hashes, so you're going to pick up those unrelated things even more than you would from exact hash collisions alone.

> I’d be surprised that a YouTube video of white noise would be flagged for a copyright violation, and yet here we are.

Speak for yourself, because that didn't surprise me at all.
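To make the "looks alike when you squint" point concrete, here is a minimal sketch using average hashing (aHash). This is a much simpler perceptual hash than PhotoDNA or Apple's NeuralHash, swapped in purely for illustration; the 8x8 grayscale input grid, the 10-bit matching cutoff and all helper names are assumptions, not any vendor's actual scheme.

```python
import hashlib
import random

def average_hash(pixels):
    """Average hash (aHash) of an 8x8 grayscale grid (values 0-255).

    Each of the 64 bits is 1 when that pixel is brighter than the grid's mean.
    Real pipelines first shrink and grayscale the full image to such a grid.
    """
    flat = [p for row in pixels for p in row]
    mean = sum(flat) / len(flat)
    bits = 0
    for p in flat:
        bits = (bits << 1) | (1 if p > mean else 0)
    return bits

def hamming(a, b):
    """Number of bit positions in which two hashes differ."""
    return bin(a ^ b).count("1")

def fuzzy_match(h1, h2, max_distance=10):
    """Hypothetical matching rule: a 'hit' if at most max_distance bits differ.

    The cutoff of 10 is an illustrative assumption, not any vendor's value.
    """
    return hamming(h1, h2) <= max_distance

def sha256_of(pixels):
    """Cryptographic hash of the raw pixel bytes, for comparison."""
    return hashlib.sha256(bytes(p for row in pixels for p in row)).hexdigest()

rng = random.Random(42)
original = [[rng.randrange(250) for _ in range(8)] for _ in range(8)]

# Slight edit: brighten every pixel by 3 levels (values stay below 256).
edited = [[p + 3 for p in row] for row in original]

# A different picture that keeps only the coarse light/dark layout:
# every pixel becomes either 200 (light) or 50 (dark).
mean = sum(p for row in original for p in row) / 64
two_tone = [[200 if p > mean else 50 for p in row] for row in original]

h_orig = average_hash(original)
print(sha256_of(original) == sha256_of(edited))     # False: any byte change alters it
print(hamming(h_orig, average_hash(edited)))        # 0: the edit does not move the hash
print(fuzzy_match(h_orig, average_hash(two_tone)))  # True: "looks alike when you squint"
```

The brightness edit changes the SHA-256 completely but leaves the perceptual hash untouched, which is why matching has to be fuzzy. The same coarseness means a visibly different two-tone picture lands on exactly the same hash, so a fuzzy cutoff only widens the net further.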

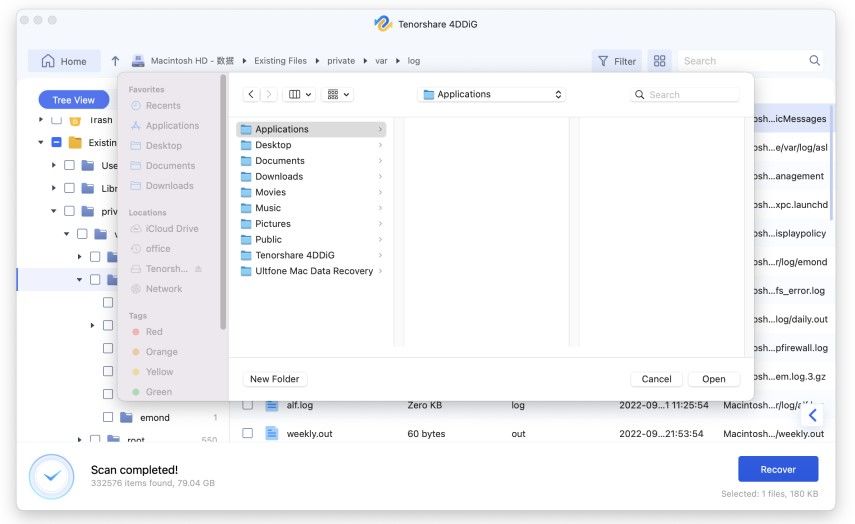

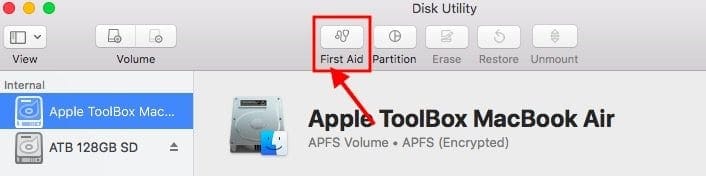

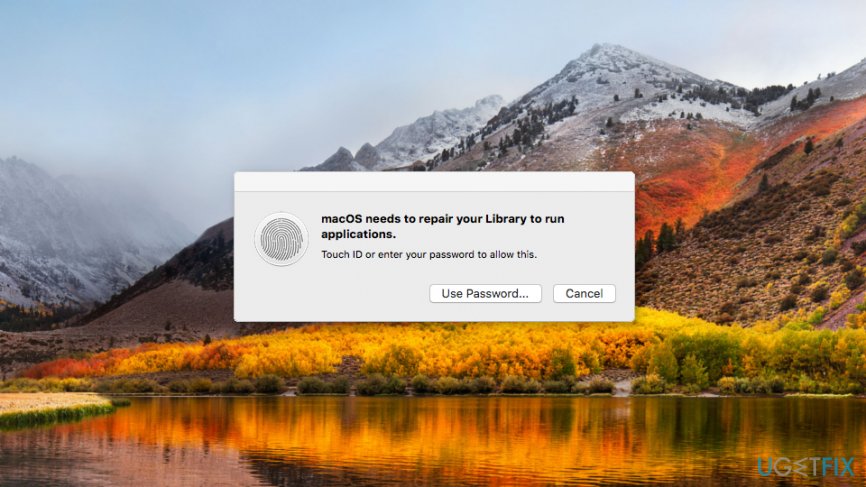


Lots of people responding to this seem not to understand how perceptual hashing / PhotoDNA works. It's true that they're not cryptographic hashes, but the false positive rate is vanishingly small. Apple claims it's 1 in a trillion, but suppose that you don't believe them. Google and Facebook and Microsoft are all using PhotoDNA (or equivalent perceptual hashing schemes) right now. Have you heard of some massive issue with false positives? Then where are the news reports or articles about these false positives that would have shown up within the past decade? That's how long these companies have been using PhotoDNA on the server side. And the version of PhotoDNA from ten years ago would probably have been inferior to the version in place now.

The fact of the matter is that unless you possess a photo that exists in the NCMEC database, your photos simply will not be flagged to Apple. Photos of your own kids won't trigger it, nude photos of adults won't trigger it; only photos of already known CSAM content will trigger it (and even then, Apple requires a specific threshold of matches before a report is triggered). As Apple puts it: "The threshold is selected to provide an extremely low (1 in 1 trillion) probability of incorrectly flagging a given account."

Is there even a single verifiable report of such a false positive? I feel that, with the amount of attention brought to this issue, if there were such a report, it would probably have been brought up by now.

Beyond that, assume that a false positive occurs and the innocent person is taken to court. The courts would still need admissible evidence, and I don't believe that only having a perceptual hash and a set of legally photographed images clears that bar. At most, that would become evidence against using perceptual hashing in future court cases. Why would they have to fear being convicted if they don't actually hold any incriminating evidence? The issue in that case is the violation of the innocent person's privacy, not the risk of being falsely convicted.

However, it becomes a completely separate issue if the false positives are "coincidentally" used to persecute marginalized groups in other countries where the same set of laws doesn't apply. But Apple has stated that they have no intention of expanding the system's scope to follow those laws. We are free to disbelieve them, but that's what they've stated. There isn't any evidence yet that Apple will do such a thing, or that they've already done it in the past. We can only hope that they won't change their minds.

> Why would they have to fear being convicted if they don't actually hold any incriminating evidence?

- Because in a case like this, you'll be paying a lot of bail just for the privilege of defending yourself properly (you might have to sell or mortgage your house, or your mother's house).
- Because we know that the US justice system is horribly corrupt and inept and doesn't care whether it gets it right, as long as it gets a win.
- Because you will probably get the living shit beat out of you by the arresting police.
- Because you will probably get the living shit beat out of you by your fellow inmates.
- Because the incompetent people who charged you can't be sued due to qualified immunity, so it's no skin off their back.
- Because you will probably make the news, and the false accusation will be on the internet forever.
- Because you've lost your job in the process.
- Because you'll probably never get a decent job again.
- Because your family will be under a lot of stress, and this will affect your spouse, children, siblings and parents in a very negative way, probably for the rest of their lives.
- Because you'll probably need a lot of psychological counseling, assuming you survive this process.
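The threshold argument (a report needs several matches per account, not one) can be sketched with a simple probability model. Assume, hypothetically, that each innocent photo false-matches independently with a fixed per-photo probability; the number of false matches in a library is then binomial, and the account is flagged once it reaches the threshold. The library size, per-photo rate and thresholds below are made-up illustrative values, not Apple's parameters, and the function names are hypothetical.

```python
import math

def log_binom_pmf(k, n, p):
    """log P(X = k) for X ~ Binomial(n, p), computed in log space to avoid overflow."""
    return (math.lgamma(n + 1) - math.lgamma(k + 1) - math.lgamma(n - k + 1)
            + k * math.log(p) + (n - k) * math.log1p(-p))

def account_flag_probability(n_photos, p_false_match, threshold):
    """P(at least `threshold` of `n_photos` innocent photos falsely match).

    Assumes every photo false-matches independently with the same probability,
    which is a strong modelling assumption (near-duplicate photos are correlated).
    """
    return sum(math.exp(log_binom_pmf(k, n_photos, p_false_match))
               for k in range(threshold, n_photos + 1))

# Hypothetical inputs, chosen only for illustration (not Apple's parameters):
N_PHOTOS = 10_000   # size of one user's library
P_FALSE = 1e-6      # per-photo false-match rate
for t in (1, 2, 5, 30):
    print(t, account_flag_probability(N_PHOTOS, P_FALSE, t))
```

Under independence, the tail shrinks extremely fast as the threshold rises, which is the shape of the argument behind a "1 in 1 trillion per account" figure. The independence assumption is exactly what the skeptical replies question: bursts of near-identical shots, or a class of innocent images that happen to resemble a database entry, would produce correlated matches, and this model does not capture that.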